Americans have become familiar with a recurring script: A killer lurks in our food supply, frightening in its ubiquity and toxicity. Experts agree that it is scientifically proven to be dangerous. If we rout this scourge, thousands of lives will be saved!

Over the years various substances have played the villain in this script, including saturated fats, high-fructose corn syrup, Alar, red food dye, and trans fats. The newest member of the club is salt.

Common table salt—sodium chloride—is facing fresh scrutiny from public-health practitioners, policy makers, and activist groups for its possible contribution to high blood pressure and stroke. In 2008 New York City’s then–Health Commissioner Thomas Frieden met with food manufacturers to request voluntary reductions in the salt content of foods. Frieden, now head of the Centers for Disease Control and Prevention, told the New York Times in 2009, “If there’s not progress in a few years, we’ll have to consider other options, like legislation.” Across the pond the British Food Standards Agency set salt-reduction targets for 2012 that its spokesperson admits are “challenging.” Companies like Unilever and Campbell Soup Company have announced comprehensive salt-reduction plans, while firms like Nabisco and PepsiCo have strategically reduced salt in some brands.

A population-wide reduction in salt intake could turn out to be a positive development for public health. But the intertwined stories of saturated fat and trans fat offer a warning for the future of salt: supposed “improvements” can turn out to be the most insidious nutritional villains of all.

Previous generations of nutritional scientists and chefs from around the world celebrated fatty foods for their ample calories, vitamins, micronutrients, and essential fatty acids. But after World War II, with fewer people suffering from nutritional deficiencies or dying of infectious diseases, American scientists and nutrition reformers began to study the role of dietary fats in heart disease and cancer. The lipid hypothesis, the idea that saturated fat in the diet raises serum cholesterol and thereby leads to heart disease, was the subject of considerable controversy in the 1960s, 1970s, and 1980s. For example, the 1977 report Dietary Goals for the United States, prepared by the Senate Select Committee on Nutrition and Human Needs, was arguably the first non-wartime government document to recommend that Americans eat less of anything, namely cholesterol and saturated fat. Meat, egg, and dairy producers were not pleased.

Soon thereafter, three National Academy of Sciences commissions struggled with the uncertain relationship between dietary fat and disease when preparing reports on diet and health in the early 1980s. By 1984 an American Heart Association commission concluded, “Although it is not definitively established that the advocated alterations in diet will actually reduce the incidence of [coronary heart disease] . . . it is imprudent to wait indefinitely for proofs of efficacy in the face of the high incidence of coronary heart disease.”

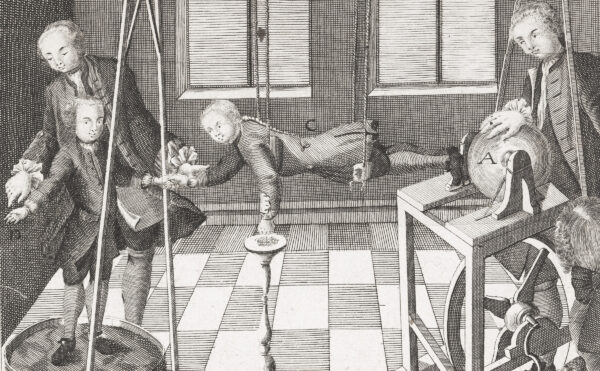

The lipid hypothesis became increasingly established in research agendas, funding streams, school curricula, and federal food programs in the 1980s. Activist groups also increasingly targeted food corporations for using saturated fat. Phil Sokolof, an Omaha cholesterol crusader, took out full-page national newspaper advertisements in the late 1980s asking, “Who is poisoning America? Food processors are by using saturated fats! . . . YOUR LIFE MAY BE AT STAKE.” Sokolof accompanied his indignant messages with photos of killer products like Goldfish crackers, Oreos, and Fig Newtons.

But what could food companies use to replace saturated fats? In the 1980s the Center for Science in the Public Interest (CSPI) routinely praised food manufacturers for switching from saturated fats to partially hydrogenated vegetable oils, which contain trans fat. CSPI described Burger King’s switch to vegetable oils for its Chicken Tenders as “a boon to Americans’ arteries.” Sokolof likewise praised Burger King’s switch in his newspaper ads, and CSPI publicly expressed hope that other chains would follow suit.

Indeed, nearly every chain restaurant and packaged-food manufacturer eventually replaced saturated fats with partially hydrogenated soybean oil. This was not only because of pressure from activist groups. Partially hydrogenated oils also offer many functional advantages in frying, baking, food formulation, and shelf life. They are highly customizable for different manufacturing applications. Unlike palm or coconut oils, partially hydrogenated soybean oil is low in saturated fat and made in the USA. It’s also vegetarian and pareve, meaning it can be eaten with either meat or dairy under kosher rules.

But in 1990 the New England Journal of Medicine published a clinical study from the Netherlands arguing that trans fats were probably worse for human health than saturated fats because they not only raise “bad” LDL cholesterol but also lower “good” HDL cholesterol. Those findings were confirmed in 1994 by industry-sponsored USDA research and by epidemiological findings from the Nurses’ Health Study. That same year, CSPI petitioned the FDA to add trans fats to nutrition labels on packaged foods.

Almost immediately seed companies and trade associations began to develop trans fat alternatives. Scores of manufacturers have replaced trans fats since the FDA announced mandatory trans fat labeling in 2003. Many are using soybean oils that do not require partial hydrogenation because they are crushed from beans bred to minimize linolenic acid, which slows oxidation and raises the oil’s smoke point in finished products. Some companies are using sunflower oils bred for higher oleic acid, which offer similar manufacturing advantages. For baking many manufacturers have returned to palm oil, despite its saturated fat content.

Yet many critics have consistently pointed out that a causal relationship between saturated fats and heart disease has never been proven, either clinically or epidemiologically. Walter Willett, probably America’s most important epidemiologist, has argued that the lipid hypothesis, long dominant in American nutrition research, “was overly simplistic” because diet affects heart disease through many biological pathways besides total serum cholesterol or LDL cholesterol. Willett, a lead researcher on the Nurses’ Health Study, publicly lamented the replacement of saturated fat with trans fats, telling the New York Times that a lot of doctors “had made their careers telling people to eat margarine instead of butter. . . . When I was a physician in the 1980s, that’s what I was telling people to do, and unfortunately we were often sending them to their graves prematurely.”

Will salt replacements prove similarly chastening? Salt, found in nearly every recipe and food product, contributes texture and structure in baked goods, controls spoilage in packaged foods, and regulates fermentation in pickled items. It maintains color and emulsification in everything from salami to sorbet.

Several supply companies have developed salt alternatives, usually based on salty-tasting potassium chloride blended with agents to mask its unpleasantly metallic flavor. But potassium chloride could be toxic to people with kidney disease, who are advised to limit both sodium and potassium, raising questions about the need for new labeling rules. Some food technologists have reduced salt content by using specially shaped or positioned salt crystals. Others have proposed replacing salt with hydrolyzed vegetable protein, yeast extracts, and other sources of glutamates and aspartic acid. But none of those products can replace salt’s functional attributes in baked, frozen, fermented, emulsified, and other processed foods.

Individuals are often frustrated when nutritional villains are acquitted. Woody Allen’s character in the film Sleeper is revived in the year 2173 after 200 years of cryogenic suspension and requests something called wheat germ, along with organic honey and tiger’s milk, for breakfast. The attending doctor is baffled, but a colleague with some knowledge of medical history informs her that those were the “charmed substances” once thought to contain life-preserving properties. “You mean there was no deep fat?” she asks incredulously. “No steak or cream pies or hot fudge?” Her colleague responds with a bemused shrug. “Those were thought to be unhealthy. Precisely the opposite of what we now know to be true.”

We are not likely to find out, as Allen’s character did, that smoking is healthful, too. But when reengineering food on a broad scale, we should constantly be aware that we might be totally wrong or that the solution might be worse than the problem. Consumers are advised to take horror stories about food with a grain of salt.